Research news

How to create a spotlight of sound with LEGO-like bricks

By: Neil Vowles

Last updated: Friday, 10 May 2019

Academics have created devices capable of manipulating sound in the same way as light – creating exciting new opportunities in entertainment and public communication.

At the ACM CHI Conference on Human Factors in Computing Systems, researchers at the universities of Sussex and Bristol have shown how the practical laws used to design optical systems can also be applied to sound through acoustic metamaterials.

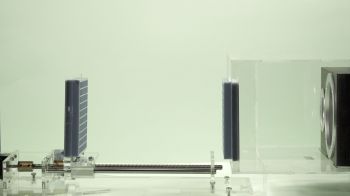

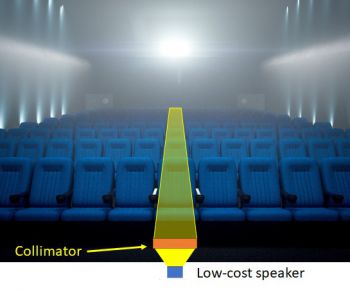

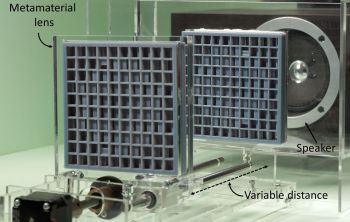

The researchers have demonstrated the first dynamic metamaterial device that gives sound the zoom capability of a varifocal lens. The project team have also built a collimator capable of transmitting sound from a standard speaker as a directional beam.
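As a rough illustration of what "varifocal" means, the sketch below uses the textbook formula for two thin lenses from optics. It assumes, purely for illustration, that the same relation carries over to a pair of acoustic lenses; the article does not give the team's design equations, and the function name and the 10 cm focal lengths are hypothetical.

```python
def combined_focal_length(f1: float, f2: float, separation: float) -> float:
    """Effective focal length (m) of two thin lenses `separation` metres apart.

    Textbook optics relation: 1/f = 1/f1 + 1/f2 - d/(f1*f2).
    Assumed here to apply to sound lenses; not taken from the paper.
    """
    denominator = f1 + f2 - separation
    if denominator == 0:
        # Afocal arrangement: the output is neither converging nor diverging.
        return float("inf")
    return f1 * f2 / denominator


if __name__ == "__main__":
    # Hypothetical pair of identical lenses, each with a 10 cm focal length.
    for d_cm in (0, 5, 10, 15, 20):
        f = combined_focal_length(0.10, 0.10, d_cm / 100)
        print(f"separation {d_cm:2d} cm -> effective focal length {f:.3f} m")
```

Under these assumptions, sliding one lens relative to the other changes the effective focal length, which is the zoom behaviour a varifocal system provides.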

The breakthrough has the potential to revolutionise the entertainment industry as well as many aspects of communication in public life by creating directional speakers that can reach out to an individual in a crowd.

Dr Gianluca Memoli, Lecturer in Novel Interfaces and Interactions at the University of Sussex, who leads this research, said: “Acoustic metamaterials are normal materials, like plastic or paper or wood or rubber, but engineered so that their internal geometry sculpts the sound going through. The idea of acoustic lenses has been around since the 1960s and acoustic holograms are starting to appear for ultrasound applications, but this is the first time that sound systems with lenses of practical sizes, similar to those used for light, have been explored.”

The technology takes everyday materials like glass, wood and 3D printer plastic and engineers them to control, direct and manipulate waves in uncommon ways, making metasurfaces behave like converging lenses for sound.

The research team believe the technology opens up a multitude of opportunities, including the ability to use a sound lens as a receiver to pinpoint alarming noises, such as within a machine to identify a fault or within selected areas of a house to distinguish a burglar breaking a window from a dog barking in the background.

Used as an acoustic telescope, the technology can zoom in on a single person in a crowd to either deliver or receive acoustic messages.

The academics also believe that the technology can help bring truly 360-degree sound coverage, ensuring that every member of an audience has the optimal listening experience.

Letizia Chisari, who contributed to this work during a summer placement at Sussex, added: “In the future, acoustic metamaterials may change the way we deliver sound in concerts and theatres, making sure that everyone really gets the sound they paid for. We are developing sound capability that could bring even greater intimacy with sound than headphones, without the need for headphones.”

The new technology has distinctive advantages over previous advances in sound technology.

Surround sound systems in cinemas and home theatres have an aural sweet spot restricted to a narrow listening area, while audio spotlights are expensive and are currently unable to transmit high-quality sound.

Acoustic lenses in ultrasonic transducers and some high-end home audio are designed to be much larger than the wavelength, meaning their use is confined to the higher part of the acoustic spectrum.
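The link between lens size and frequency follows from the relation wavelength = speed of sound / frequency. The sketch below is a back-of-the-envelope illustration only: it assumes sound travelling in air at about 343 m/s (roughly 20 °C), and it takes "much larger than the wavelength" to mean ten wavelengths, a factor chosen here for illustration rather than taken from the research.

```python
SPEED_OF_SOUND_AIR = 343.0  # m/s, assumed value for air at about 20 °C
SIZE_FACTOR = 10            # illustrative stand-in for "much larger than"


def wavelength_m(frequency_hz: float, speed: float = SPEED_OF_SOUND_AIR) -> float:
    """Return the wavelength in metres of a sound wave at the given frequency."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return speed / frequency_hz


if __name__ == "__main__":
    # From a low bass note up to a typical ultrasonic transducer frequency.
    for f in (100, 1_000, 10_000, 40_000):
        lam = wavelength_m(f)
        print(f"{f:>6} Hz: wavelength {lam * 100:7.2f} cm, "
              f"lens of {SIZE_FACTOR} wavelengths {SIZE_FACTOR * lam:6.2f} m")
```

Under these assumptions, a conventional lens for low audible frequencies would need to be many metres across, which is why such lenses are practical only at the upper end of the spectrum.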

Metamaterials are smaller, cheaper and easier to manufacture than phased arrays and can even be fabricated in recyclable materials. Metamaterial devices also lead to fewer aberrations than speaker arrays, even over limited bandwidths.

Jonathan Eccles, a Computer Sciences undergraduate student at the University of Sussex, said: “Using a single speaker, we will be able to deliver alarms to people moving in the street, like in the movie Minority Report. Using a single microphone, we will be able to listen to small parts of a machinery to decide everything is working fine. Our prototypes, while simple, lower the access threshold to designing novel sound experiences: devices based on acoustic metamaterials will lead to new ways of delivering, experiencing and even thinking of sound.”

The paper “VARI_SOUND: a vari-focal lens for sound” was presented at the ACM CHI Conference on Human Factors in Computing Systems (CHI 2019) in Glasgow on Monday (6 May).

This research is funded by the Engineering and Physical Sciences Research Council (EPSRC-UKRI) through fellowship “AURORA” (grant EP/S001832/1) and by the Royal Academy of Engineering through the Chair in Emerging Technologies scheme (grant CiET1718/14).
